It is very important to set NPAR explicitly when running MPI-parallel calculations with VASP. VASP’s default is NPAR = number of MPI ranks (or cores), which is generally a terrible choice on today’s clusters. Specifying a lower NPAR value in the INCAR file can give you at least 30% higher speed. At NSC, we have even seen cases where using NPAR = number of cores produced incorrect results.

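What does NPAR do? You should check the section about NPAR in the VASP manual, but basically, it controls how the parallelization over bands is done. NPAR is the number of bands treated in parallel: the MPI ranks are split into NPAR groups, and the ranks within each group share the work on one band. With the default NPAR = number of ranks, every rank works on its own band.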

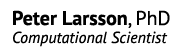
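To make the grouping concrete, here is a minimal sketch of how a given NPAR splits the MPI ranks into band groups. The function name `band_layout` is hypothetical, and the sketch simply rejects NPAR values that do not divide the rank count instead of modelling how VASP would adjust them.

```python
def band_layout(n_ranks: int, npar: int) -> tuple[int, int]:
    """Return (band groups, MPI ranks per band group) for a given NPAR.

    NPAR band groups work on different bands at the same time; the ranks
    inside one group share the work on a single band. Newer VASP versions
    call the ranks-per-group quantity NCORE.
    """
    if npar < 1 or n_ranks % npar != 0:
        # Simplification: reject NPAR values that do not divide the rank
        # count rather than guessing how VASP would adjust them.
        raise ValueError("NPAR must be >= 1 and divide the number of MPI ranks")
    return npar, n_ranks // npar


# Possible layouts for a hypothetical 8-rank job (one 8-core node).
for npar in (8, 4, 2, 1):
    groups, per_group = band_layout(8, npar)
    print(f"NPAR={npar}: {groups} band group(s) x {per_group} rank(s) each")
```

The first line (NPAR=8) is the default for an 8-rank job: eight groups of a single rank each, so no rank shares work on a band with any other.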

Below is an example from NSC’s Mozart, an SGI Altix 3700 BX2 shared-memory machine, running a 24-atom PbSO4 cell:

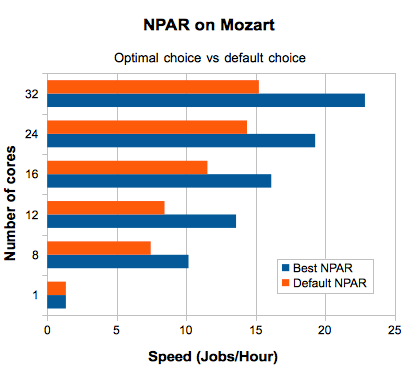

The second example is an FeCo (Wairauite) supercell with 53 atoms on NSC’s Neolith cluster:

In general, the influence of NPAR grows as you run wider parallel jobs, and for big jobs a bad NPAR choice can kill performance. In the example above, running on 32 cores with a good NPAR value gave the same speed as running on 64 cores with the default value.
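In terms of cost, equal speed on half the cores means the default NPAR burns twice the allocation. A minimal sketch of that arithmetic, assuming a hypothetical wall time that is the same for both runs:

```python
def core_hours(cores: int, wall_hours: float) -> float:
    """Cost in core-hours: every allocated core is billed for the wall time."""
    return cores * wall_hours


# Both runs finish in the same (hypothetical) wall time, per the comparison above.
wall = 1.0
tuned = core_hours(32, wall)    # 32 cores with a good NPAR value
default = core_hours(64, wall)  # 64 cores with the default NPAR
ratio = default / tuned
print(f"Default NPAR costs {ratio:.1f}x the core-hours")  # -> 2.0x
```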

So far, I have studied the optimal value of NPAR on the following SNIC resources: Neolith, Mozart, Matter, and PDC’s Lindgren.

Mozart

NSC’s Mozart will soon be obsolete and replaced by new fat nodes in the Kappa cluster, but here are the NPAR recommendations:

- 1 core & NPAR = 1

- 8 cores & NPAR = 2

- 12 cores & NPAR = 3

- 16 cores & NPAR = 2

- 24 cores & NPAR = 4

- 32 cores & NPAR = 4
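Because the Mozart recommendations are measured values rather than a formula, a script that writes INCAR files would just look them up. A small sketch, with the hypothetical helper `mozart_npar` refusing to extrapolate to core counts that were not measured:

```python
# Measured NPAR recommendations for Mozart, keyed by number of cores.
MOZART_NPAR = {1: 1, 8: 2, 12: 3, 16: 2, 24: 4, 32: 4}


def mozart_npar(cores: int) -> int:
    """Look up the recommended NPAR; only measured core counts are known."""
    if cores not in MOZART_NPAR:
        raise ValueError(f"no measured NPAR recommendation for {cores} cores")
    return MOZART_NPAR[cores]


print(f"NPAR = {mozart_npar(24)}")  # INCAR line for a 24-core run
```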

Neolith & Matter clusters

Neolith and Matter are clusters whose compute nodes have dual-socket Xeon processors (8 cores in total) connected with InfiniBand. I expect the results to apply to other similar systems as well (such as NSC’s Kappa and UPPMAX’s Kalkyl).

The formula is roughly:

NPAR = number of compute nodes / 2.

Some examples:

- 1 node & NPAR = 1

- 2 nodes & NPAR = 1

- 4 nodes & NPAR = 2

- 8 nodes & NPAR = 4

- 16 nodes & NPAR = 8

- 24 nodes & NPAR = 16

- 32 nodes & NPAR = 16

For more than 32 nodes, keep NPAR at 16.
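The rough rule can be sketched as a function: half the node count (integer division), never below 1 and capped at 16. This is only the rule of thumb; note that for 24 nodes it gives 12, while the measured list above recommends 16, so prefer the measured value where one exists. The function name is hypothetical.

```python
def npar_neolith(nodes: int) -> int:
    """Rule of thumb for 8-core-node InfiniBand clusters like Neolith/Matter.

    NPAR = nodes / 2 (integer division), never below 1 and capped at 16.
    """
    if nodes < 1:
        raise ValueError("need at least one compute node")
    return max(1, min(16, nodes // 2))


for nodes in (1, 2, 4, 8, 16, 24, 32, 64):
    print(f"{nodes:>2} nodes ({8 * nodes:>3} cores): NPAR = {npar_neolith(nodes)}")
```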

Lindgren (Cray XE6)

The Cray XE6 has a different interconnect and also fatter nodes: 24 cores each, from two 12-core AMD Opteron processors that each contain two 6-core dies. The relationship I found there is:

NPAR = number of compute nodes

Note that one compute node corresponds to 24 cores here. So a 96-core batch job uses 4 nodes, and you should set NPAR = 4.
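The same conversion from cores to NPAR, as a sketch. How to handle a core count that is not a multiple of 24 is not covered by the measurements; this hypothetical helper assumes a partial node counts as a whole one.

```python
import math

CORES_PER_NODE = 24  # one Lindgren (Cray XE6) compute node


def npar_lindgren(cores: int) -> int:
    """NPAR = number of compute nodes (assumption: partial nodes round up)."""
    if cores < 1:
        raise ValueError("need at least one core")
    return math.ceil(cores / CORES_PER_NODE)


print(f"96 cores -> NPAR = {npar_lindgren(96)}")  # 4 nodes -> NPAR = 4
```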

NPAR and NBANDS

Another confusing aspect of NPAR is that its value influences the number of bands used in your VASP calculation, unless you set the number of bands explicitly with the NBANDS tag in the INCAR. The reason is that VASP wants an equal number of bands in each band group when parallelizing over bands, so it rounds the number of bands up to a multiple of NPAR. Thus, you can unknowingly perform calculations with different numbers of bands just by changing the number of cores in the job script. So, once again, set both NPAR and NBANDS in the INCAR file. Alternatively, make sure that your calculation is converged with respect to the number of bands. VASP guesses the number of bands with quite a large safety margin, but it is not always enough!
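A small sketch of the effect, assuming VASP simply rounds the band count up to the next multiple of NPAR. The starting value of 100 bands is hypothetical; VASP’s own default NBANDS formula is not reproduced here.

```python
def rounded_nbands(nbands: int, npar: int) -> int:
    """Round NBANDS up to the nearest multiple of NPAR (assumed VASP behaviour)."""
    return -(-nbands // npar) * npar  # ceiling division without floats


# Hypothetical starting guess of 100 bands; changing NPAR changes the band count.
for npar in (1, 4, 8, 16):
    print(f"NPAR = {npar:>2}: NBANDS = {rounded_nbands(100, npar)}")
```

Under this assumption, NBANDS = 112 is a multiple of 1, 2, 4, 8 and 16, so setting it explicitly would keep the band count fixed for all of those NPAR values.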